Is Your Lawyer on Speed Dial?

Or

What do Big Data and Drug Dealing have in Common?

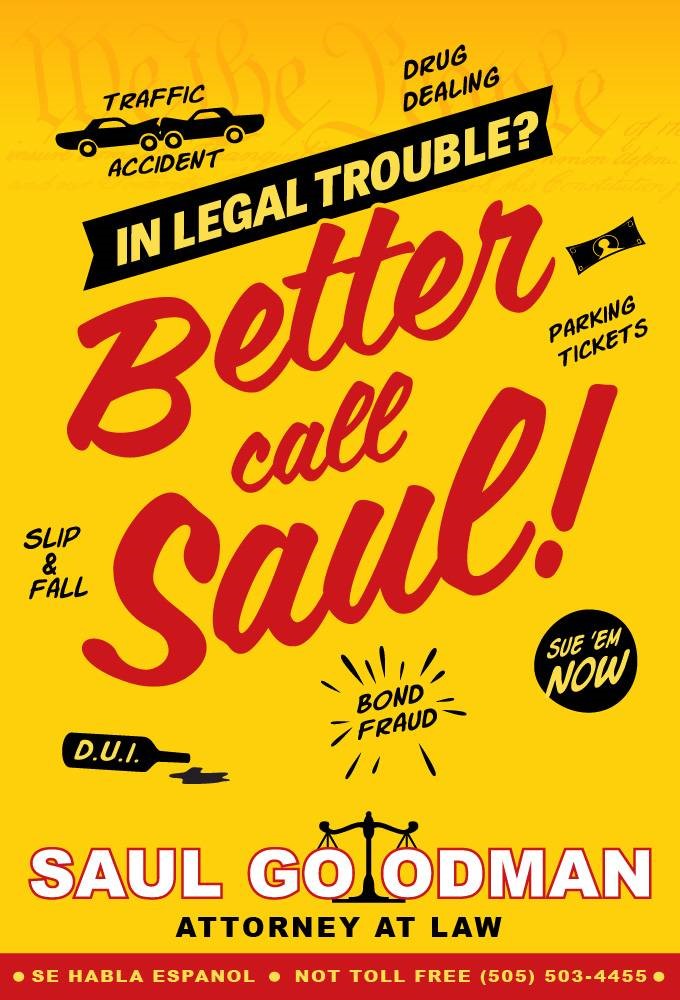

Breaking Bad fans know Saul Goodman, the attorney of Better Call Saul fame. In one episode, he tells prospective shady clients, “I’m the guy on your speed dial.”

Petty crooks and drug dealers may need this advice, but do data scientists and big data analysts need to worry?

Some suggest not: algorithms can’t be sued. A senior Google technologist is reported to have questioned the value of XAI, or “Explainable” Artificial Intelligence. The argument is this: forcing AI to be explainable is too constraining. https://www.computerworld.com.au/article/621059/google-research-chief-questions-value-explainable-ai

But facts are running the other way.

In the EU, GDPR will require a great deal of algorithmic transparency when algorithms affect citizens’ lives. In the US, the ACM has issued seven principles, or guidelines, on this topic. The ACM guideline, “Principles for Algorithmic Transparency and Accountability,” says, “Even well-engineered computer systems can result in unexplained outcomes or errors, either because they contain bugs or because the conditions of their use changes, invalidating assumptions on which the original analytics were based….” https://www.acm.org/binaries/content/assets/public-policy/2017_usacm_statement_algorithms.pdf

Lone Star Poll

In early 2017, Lone Star conducted polling to explore Americans’ attitudes toward algorithm transparency. Our respondents agreed with the ACM, not Google.

We asked about several hypothetical experiences. In every case, respondents saw transparency as a benefit.

Respondents sitting on an imaginary jury were more likely to find a self-driving car company liable when its algorithms were unexplainable.

Most of our respondents said they had a “right to know” how algorithms worked when it came to issues like credit ratings, safety, health care, insurance risk ratings, school admissions and investments.

A majority also said they had “rights because someone collected data about you” in a wide range of situations: nearly every case in which businesses and governments collect data, including taxes, social media, utility smart meters and smart cars.

We’ve reported some of these findings here: https://lone-star.com/do-you-know-your-big-data-bill-of-rights/

It seems clear that a backlash against intrusive, mysterious Big Data is building.

Others report similar findings.

In February, the Pew Research Center for Internet, Science and Tech released a lengthy report, Code-Dependent: Pros and Cons of the Algorithm Age, available here: http://pewrsr.ch/2kslvuK

Many of the concerns Pew reported are echoed by a group of authors writing in Scientific American: https://www.scientificamerican.com/article/will-democracy-survive-big-data-and-artificial-intelligence/

Extrapolating these results to the world economy, a majority of the richest markets, representing about two thirds of global GDP, seem poised to resist black boxes in their marketplaces and in their courts.

Our research, the ACM, GDPR, Scientific American and Pew Research all say the same thing: “Unexplainable = Unacceptable.”

It appears that like petty crooks and drug dealers, algorithm designers who insist on black box designs should find good lawyers and keep them on speed dial.

This is why Lone Star’s methods are transparent. Period. Our methods have been proven over years in critical industrial and corporate settings, driving both the top line and the bottom line. Now we are applying them to IoT. Our proven methods generate a Return on Analytics faster than brute-force ANN (artificial neural network) or DNN (deep neural network) methods.

So, for powerful IoT analytics, do three things:

- Remember that in most things that matter, Unexplainable = Unacceptable

- Understand people feel they have rights to their data and how it is used

- Use Lone Star’s AnalyticsOS for IoT

Or ignore the market trends in two thirds of the world, and keep your lawyer on speed dial.

About Lone Star Analysis

Lone Star Analysis enables customers to make insightful decisions faster than their competitors. We are a predictive guide bridging the gap between data and action. Prescient insights support confident decisions for customers in Oil & Gas, Transportation & Logistics, Industrial Products & Services, Aerospace & Defense, and the Public Sector.

Lone Star delivers fast time to value, supporting customers’ planning and ongoing management needs. Utilizing our TruNavigator® software platform, Lone Star brings proven modeling tools and analysis that improve customers’ top line, by winning more business, and improve the bottom line, by quickly enabling operational efficiency, cost reduction and performance improvement. Our trusted AnalyticsOS℠ software solutions support our customers’ real-time predictive analytics needs when continuous operational performance optimization, cost minimization, safety improvement and risk reduction are important.

Headquartered in Dallas, Texas, Lone Star is found on the web at http://www.Lone-Star.com.