Fourth Unsolved Problem in Data Science and Analytics

Scalability is unsolved problem #4.

A great deal of work has been done on database scalability. We’ve moved on from Third Normal Form. For giant problems we have Hadoop. But these only address scaling our data access. When we get large data sets with many dimensions, we start seeing large numbers of combinations and operations, and we hit the wall on computational scalability.

It’s not hard to imagine data with seven dimensions. It’s also not hard to think of some combinations of probability testing that will require a lot of calculations if we use brute force.

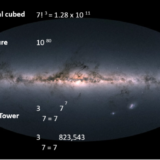

In the top equation we have the cube of 7 factorial, (7!)^3. That number is about 1.28 × 10^11, on the order of ten to the eleventh power. That’s roughly 100 billion. That is a lot of operations. When I see numbers like that, I ask two questions.
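The figure is easy to check directly. This is a minimal sketch, assuming the top equation is (7!)^3 as the text describes; the variable names are only illustrative.

```python
import math

# Assumption: the top equation in the figure is (7!)^3.
seven_factorial = math.factorial(7)       # 5040
brute_force_ops = seven_factorial ** 3    # cube of 7 factorial

print(f"{brute_force_ops:,}")             # 128,024,064,000
print(f"{brute_force_ops:.2e}")           # 1.28e+11
```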

First, how does it compare to the number of hydrogen atoms in the universe?

That’s about 10 to the 80th power, or 100 quinvigintillion. It makes our 10^11 number look less awful. That’s our second equation. It sits on top of a whole-sky picture. Most of the trillions of stars you can see are made of hydrogen atoms. It’s the most common element in the universe. Think of how long it would take to count them; how many lifetimes. Asking how long it takes to do any computation at that scale is humbling.

But the second question is, “Are we doing something worse?”

If we aren’t careful, we’ll start building power towers. The power tower is also known as tetration, superexponentiation, or hyperpower.

We see one of those in the third equation. We say it this way: “the 3rd tetration of 7”. That is 7 raised to the power 7^7.

It’s a big, big number of calculations. The number of hydrogen atoms in the universe is left far behind. We are way past the universe of numbers with names. We don’t want this to happen to us.
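To get a feel for the size of that power tower, we can count its decimal digits without ever writing the number out. This is a rough sketch, assuming the third equation is 7^(7^7); the helper names `tetration` and `digits_of_power` are hypothetical, chosen for illustration.

```python
import math

def tetration(base: int, height: int) -> int:
    """Return a power tower made of `height` copies of `base`."""
    result = 1
    for _ in range(height):
        result = base ** result   # the tower is evaluated from the top down
    return result

def digits_of_power(base: int, exponent: int) -> int:
    """Count the decimal digits of base**exponent using logarithms."""
    return math.floor(exponent * math.log10(base)) + 1

second = tetration(7, 2)                      # 7**7
third_digits = digits_of_power(7, second)     # digits in 7**(7**7)

print(second)         # 823543
print(third_digits)   # 695975
```

For comparison, 10^80 has only 81 digits. The 3rd tetration of 7 has nearly 700,000.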

Yet, this IS what often happens when we plunge into high dimensional data sets. Even quantum computing is not going to solve this class of problem. And most big data sets have high dimensional attributes; it is easy to fall into crunching at this scale. It’s called the curse of dimensionality.
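One simple way to see the curse of dimensionality is to count the cells in a grid over the data. The sketch below assumes, purely for illustration, 10 bins per dimension and a data set of one million rows; neither figure comes from a real project.

```python
def grid_cells(bins_per_dimension: int, dimensions: int) -> int:
    """Cells in a grid that splits every dimension into the same number of bins."""
    return bins_per_dimension ** dimensions

rows = 1_000_000  # hypothetical data set size

for d in (1, 3, 7, 12):
    cells = grid_cells(10, d)
    print(f"{d:>2} dimensions: {cells:,} cells, {rows / cells:g} rows per cell")
```

At seven dimensions there are already ten times more cells than rows, so most of the space is empty no matter how big the data set felt at the start.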

So, what do we do?

Well, most practitioners use dimensionality reduction in some form. When we do that, we lose all sorts of information. Clustering, PCA, and the like are all lossy procedures, but we don’t have a good alternative. Otherwise, some forms of analytics (purely naive Bayes, random forests, big neural nets) end up with the 10^80 comparison at some point unless we intervene with some trick like dimensionality reduction.
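The information loss is easy to see on a toy case. The sketch below is not any particular product’s method; it is a bare-bones PCA on made-up 2-D points, reduced to one dimension and then mapped back, so we can measure what did not survive the round trip.

```python
import math

# Hypothetical toy data: 2-D points lying near, but not on, a line.
points = [(1.0, 1.1), (2.0, 1.9), (3.0, 3.2), (4.0, 3.8), (5.0, 5.1)]

n = len(points)
mean_x = sum(x for x, _ in points) / n
mean_y = sum(y for _, y in points) / n
centered = [(x - mean_x, y - mean_y) for x, y in points]

# Entries of the 2x2 covariance matrix.
cxx = sum(x * x for x, _ in centered) / n
cyy = sum(y * y for _, y in centered) / n
cxy = sum(x * y for x, y in centered) / n

# Direction of the first principal component (closed form for the 2x2 case).
theta = 0.5 * math.atan2(2 * cxy, cxx - cyy)
ux, uy = math.cos(theta), math.sin(theta)

# Reduce each point to a single coordinate, then map it back to 2-D.
scores = [x * ux + y * uy for x, y in centered]
restored = [(s * ux + mean_x, s * uy + mean_y) for s in scores]

# Anything that was not along the principal axis cannot be recovered.
lost = sum((x - rx) ** 2 + (y - ry) ** 2
           for (x, y), (rx, ry) in zip(points, restored))
total = sum(x * x + y * y for x, y in centered)
fraction_lost = lost / total

print(f"fraction of variance lost: {fraction_lost:.4f}")
```

Here the loss is small because the points are nearly collinear. With real high dimensional data, the discarded components can carry exactly the detail an analyst needed.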

At Lone Star, we have some techniques for reducing transmission bandwidth, computational intensity and other scalability problems. We believe our methods are breakthroughs, but no one has completely solved this problem.

Dealing with computational scalability without information loss is our fourth unsolved problem.

About Lone Star Analysis

Lone Star Analysis enables customers to make insightful decisions faster than their competitors. We are a predictive guide bridging the gap between data and action. Prescient insights support confident decisions for customers in Oil & Gas, Transportation & Logistics, Industrial Products & Services, Aerospace & Defense, and the Public Sector.

Lone Star delivers fast time to value supporting customers’ planning and ongoing management needs. Utilizing our TruNavigator® software platform, Lone Star brings proven modeling tools and analysis that improve customers’ top line, by winning more business, and improve the bottom line, by quickly enabling operational efficiency, cost reduction, and performance improvement. Our trusted AnalyticsOS℠ software solutions support our customers’ real-time predictive analytics needs when continuous operational performance optimization, cost minimization, safety improvement, and risk reduction are important.

Headquartered in Dallas, Texas, Lone Star is found on the web at http://www.Lone-Star.com.
